You Can’t Delete Bias, But You Can Retrain the Reflex

Cognitive biases are not like apps you can uninstall. They are properties of our neural hardware.

However, like a reflexive flinch, they can be modulated. You may not stop the reflex from firing, but you can:

- Recognize it earlier,

- Put space between impulse and action,

- And design environments that make the biased move less likely.

This guide walks through concrete, research-grounded strategies for doing exactly that.

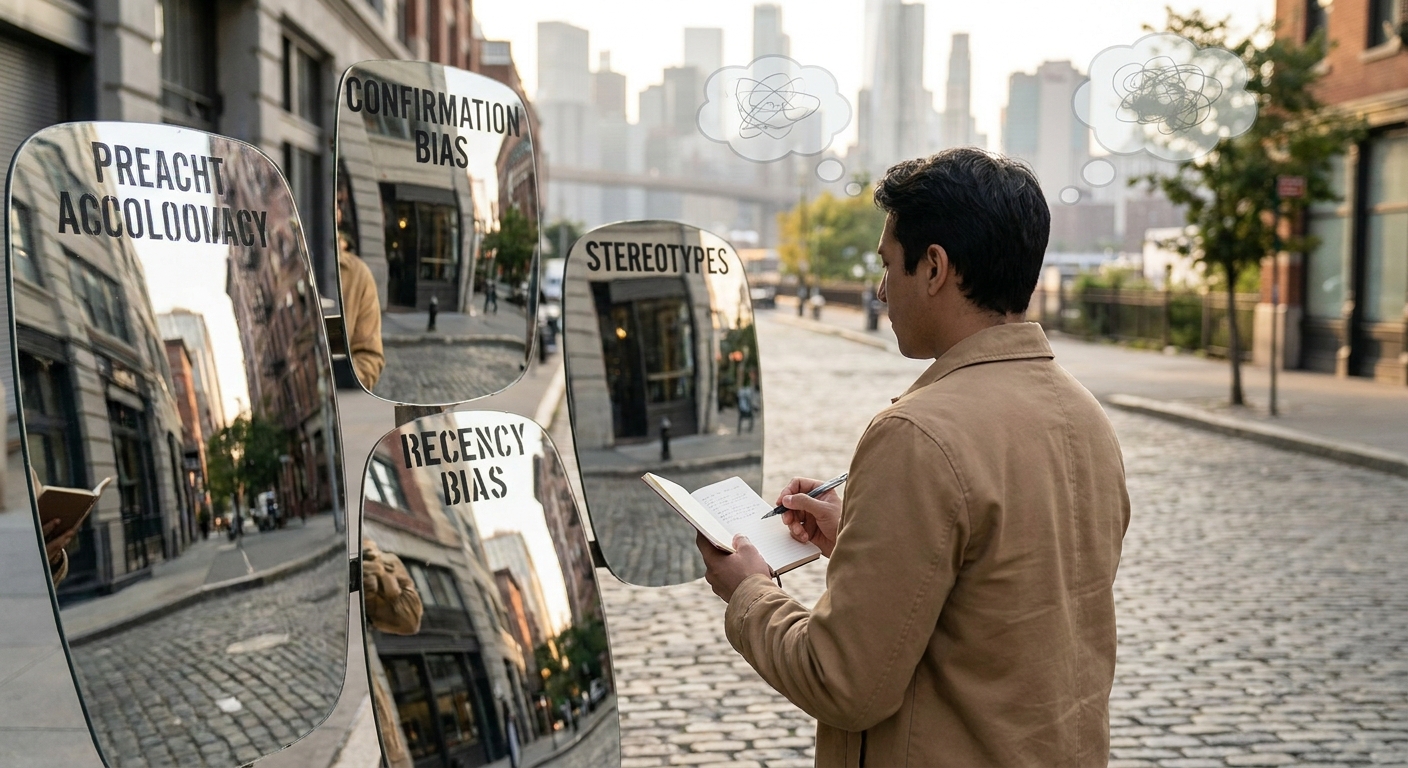

Step 1: Move from “Bias Awareness” to “Bias Detection”

Most of us are bias-aware: we know terms like confirmation bias and sunk cost fallacy.

Fewer of us are bias-detecting: able to catch biases in real time as we think.

Practice: The Daily Bias Journal

For 10 minutes at the end of the day, reflect on:

- One decision you made,

- One strong opinion you expressed,

- One time you felt unusually certain or emotional.

For each, ask:

“What was my first instinct?”

“If a bias were involved, which one would it be?”

You don’t have to be correct. The purpose is to build a habit of suspecting bias.

Why it works: Research on metacognition (thinking about thinking) suggests that labeling a mental state can change how you relate to it. Naming a potential bias shifts you from actor to observer.

Step 2: Use Implementation Intentions—If/Then Plans for Your Mind

Psychologist Peter Gollwitzer’s work on implementation intentions suggests that plans of the form “If X, then I will do Y” make people considerably more likely to follow through than a bare goal (“I want to do Y”).

You can apply this to biases:

- If I am about to make a high-stakes decision, then I will seek one piece of disconfirming evidence.

- If I feel thrilled about a financial opportunity, then I will wait 24 hours before acting.

How to Design Effective If/Then Anti-Bias Plans

- Identify a trigger: a feeling (excitement, anger), a situation (negotiation, social media), or a behavior (clicking “buy now”).

- Attach a micro-action: something you can do in under two minutes (write down base rates, text a skeptical friend, re-read criteria).

Example:

- Bias target: Confirmation bias.

- Trigger: Feeling satisfied after reading an article that fully agrees with you.

- If/Then Plan: “If I feel smugly validated by something I read, then I will search for ‘[topic] critique’ and skim at least one opposing view.”

Step 3: Build Structural Protections into Recurring Decisions

Some biases are stubborn precisely because they appear in the same contexts again and again.

Instead of relying on willpower, change the choice architecture.

3.1. Countering Overconfidence and Planning Fallacy

Tool: Reference class forecasting checklist

For any project longer than one week, answer:

What is the most similar project I’ve completed?

How long did that actually take (not how long I planned)?

What unexpected obstacles occurred then? Are any likely to repeat?

Given that, what is a realistic range for this project’s timeline?

Research on the planning fallacy, beginning with Daniel Kahneman and Amos Tversky, suggests that looking outward to a “reference class” of similar cases improves forecasting accuracy compared with thinking only about the unique features of your current project.
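The checklist above can be turned into simple arithmetic. The following is a minimal sketch, not an established forecasting method: it assumes you have planned and actual durations for a few similar past projects, and it scales the new plan by the smallest, median, and largest past overrun. The function name `reference_class_range` and the sample numbers are made up for illustration.

```python
from statistics import median

def reference_class_range(actual_days, planned_days, new_plan_days):
    """Scale a new plan by how much similar past projects overran."""
    # Overrun ratio per past project: actual duration / planned duration.
    ratios = sorted(a / p for a, p in zip(actual_days, planned_days))
    return (
        new_plan_days * ratios[0],       # optimistic: smallest past overrun
        new_plan_days * median(ratios),  # typical: median past overrun
        new_plan_days * ratios[-1],      # pessimistic: largest past overrun
    )

# Hypothetical history: three similar projects, planned vs. actual days.
low, typical, high = reference_class_range(
    actual_days=[12, 30, 20], planned_days=[10, 20, 10], new_plan_days=10
)
print(f"Realistic range: {low:.0f} to {high:.0f} days (typical {typical:.0f})")
```

With these made-up numbers the past overrun ratios are 1.2, 1.5, and 2.0, so a 10-day plan becomes a range of roughly 12 to 20 days. With only a handful of past projects the range is crude, but it anchors the estimate on what actually happened rather than on what you hoped would happen.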

3.2. Countering Sunk Cost and Status Quo Bias

Tool: Pre-scheduled “exit audits”

For subscriptions, long-term projects, or routines, put a recurring event on your calendar every 3–6 months labeled: “Keep, Change, or Cancel?”

At that moment, ask:

- “If I weren’t already doing this, would I start now?”

By deciding deliberately at set points, you reduce the stickiness of default choices.

Step 4: Use Social Design—Other Brains as Mirrors

Biases are often easier to see from the outside.

4.1. The Red Team / Blue Team Method

Borrowed from cybersecurity and military planning:

- Blue team: Proposes the main plan or belief.

- Red team: Actively tries to find flaws, alternatives, and failure modes.

You can implement a lightweight version in personal life:

- Share your plan with a trusted friend and explicitly ask them to play red team.

- Give them permission to be blunt. Your rule: no defensiveness during the session; you can decide what to accept afterward.

Evidence: Studies on group decision-making (e.g., Charlan Nemeth’s work) suggest that genuine dissent—not just polite devil’s advocacy—improves creativity and accuracy.

4.2. The “Challenge Network”

Organizational psychologist Adam Grant talks about building a “challenge network”: a small group of people who are both supportive and unafraid to criticize your ideas.

Action step:

- List 3–5 people in your life who are honest, thoughtful, and less likely to share your blind spots.

- Tell them explicitly: “I value your skepticism. When you think I’m missing something, I want you to say so.”

This social contract helps ensure you’re not surrounded only by validators.

Step 5: Train with Predictions, Not Just Opinions

Biases often sneak in through overconfidence. You feel certain but never test that feeling quantitatively.

5.1. The 30-Day Calibration Experiment

For 30 days, make one small prediction per day. Example domains:

- Work (e.g., “Will client X answer by Friday?”)

- Personal (e.g., “Will I exercise 3 times this week?”)

- World events (within reason)

For each prediction, record:

- The event,

- Your confidence (in %),

- The outcome.

At the end, review:

- When you said 70%, did events happen ~70% of the time?

- Or are you systematically over- or under-confident?

This builds calibration—matching confidence to reality—which is foundational to reducing many biases.

Evidence: Philip Tetlock’s research on “superforecasters” suggests that people who make frequent, scored predictions and review the results tend to become more accurate and better calibrated over time.
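The end-of-experiment review can be done by hand, but a few lines of code make the comparison explicit. This is a rough sketch under stated assumptions: each prediction is logged as a pair of stated confidence (in %) and whether the event happened, and predictions are grouped by the exact confidence you wrote down. The function name `calibration_table` and the sample log are hypothetical.

```python
from collections import defaultdict

def calibration_table(predictions):
    """Group predictions by stated confidence and compute the observed hit rate.

    `predictions` is a list of (confidence_percent, happened) pairs.
    """
    buckets = defaultdict(list)
    for confidence, happened in predictions:
        buckets[confidence].append(happened)
    # Hit rate = share of predictions in the bucket that came true.
    return {
        confidence: sum(outcomes) / len(outcomes)
        for confidence, outcomes in sorted(buckets.items())
    }

# Hypothetical log: (stated confidence in %, did the event happen?)
log = [(70, True), (70, True), (70, False), (70, False),
       (90, True), (90, True), (90, True), (90, False)]

table = calibration_table(log)
for confidence, hit_rate in table.items():
    gap = confidence - 100 * hit_rate
    verdict = "overconfident" if gap > 0 else "underconfident" if gap < 0 else "calibrated"
    print(f"Said {confidence}%: happened {hit_rate:.0%} of the time ({verdict})")
```

In this made-up log, the 70% predictions came true half the time and the 90% predictions three quarters of the time, so both buckets lean overconfident. Thirty predictions spread across several confidence levels leave only a few per bucket, so treat the result as a rough signal rather than a precise measurement.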

Step 6: Leverage Emotion Instead of Fighting It

Trying to reason against strong emotion often backfires. A better move is to use emotion as a signal.

6.1. The Emotion Flag Technique

When you notice intense emotion:

- Label it: “I feel angry / excited / anxious.”

- Ask: “What decision am I about to make under this state?”

Research on affect labeling (putting feelings into words) suggests that naming an emotion is associated with reduced amygdala activation and increased activity in prefrontal regions involved in regulation. This can create just enough distance to reconsider.

6.2. Pair Emotions with Pre-Commitment

Anticipate emotional states:

- When calm, decide: “If I feel furious during an online debate, I will close the app until tomorrow.”

- Or: “If I feel euphoric about an investment idea, I will explain it in writing to a skeptical friend before acting.”

This harnesses System 2 planning (slow, deliberate thinking) to constrain System 1 reactions (fast, automatic ones).

Step 7: Accept Imperfection, Focus on Trajectory

You will never be bias-free. You can, however, be less wrong over time.

Meaningful progress looks like:

- Shorter lag between biased thought and recognition (“Oh, that’s my confirmation bias again”).

- Smaller stakes attached to biased decisions (you keep bias out of your biggest choices more often).

- More systematic learning from mistakes (because hindsight bias is slightly weaker).

A Simple Monthly Review

Once a month, ask yourself:

“What decision went poorly this month?”

“If I had to blame a bias, which one would it be?”

“What micro-change could I adopt to reduce that bias next time?”

Write down just one actionable tweak. Implement it the following month. This slow, iterative loop is how you genuinely retrain your reflexes.

The Mindset Shift That Makes All of This Work

Instead of viewing biases as embarrassing flaws, treat them as testable hypotheses about your own thinking.

- Hypothesis: “When I’m very confident about timelines, I underestimate by 50%.”

- Experiment: Track time for three projects and compare.

- Update: Adjust future estimates accordingly.

This scientific attitude, applied inward, turns cognitive bias from a source of shame into a source of fascination—and gradual, compounding improvement.

You can’t stop your brain from being human. But you can become the kind of human whose mind is not just thinking, but quietly, persistently, learning how it thinks.